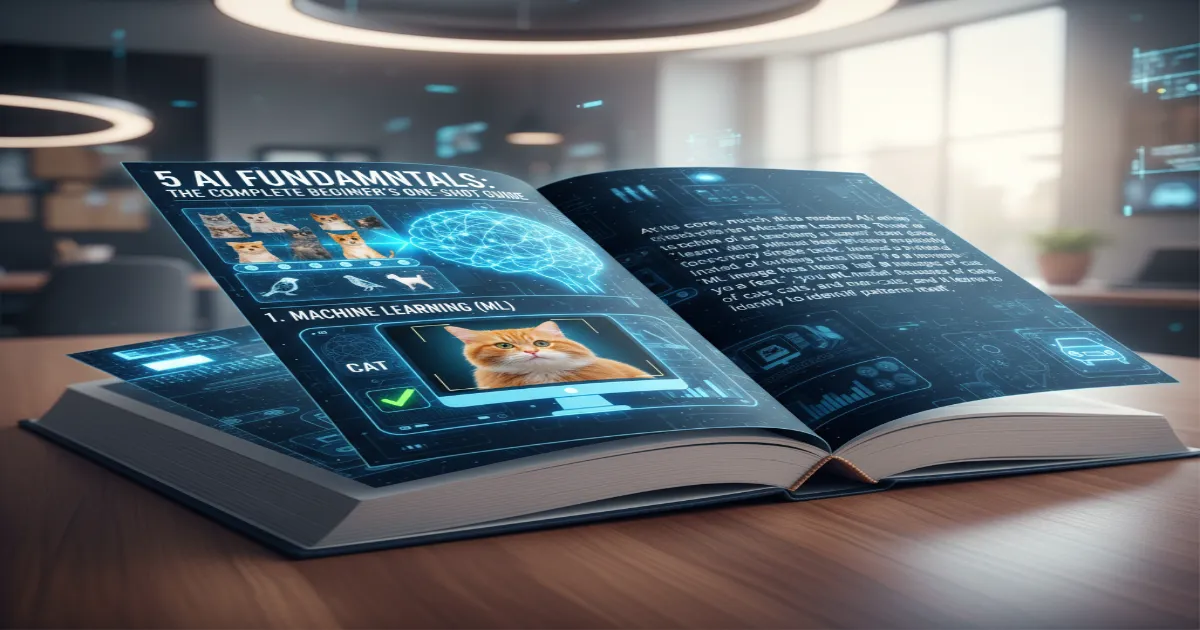

5 AI Fundamentals: The Complete Beginner’s One-Shot Guide

Demystifying Artificial Intelligence: The Foundation of the Future

In an era where technology evolves at breakneck speed, Artificial Intelligence (AI) has transitioned from science fiction to an integral part of our daily existence. Whether it is unlocking your smartphone with Face ID, asking a virtual assistant for the weather forecast, or relying on curated recommendations on streaming platforms, AI is the silent engine powering these conveniences.

The video ‘AI Complete OneShot Course for Beginners’ by Apna College serves as a definitive primer for anyone looking to peel back the layers of this complex technology. This extensive guide extracts the core technical concepts, offering a structured pathway from basic definitions to advanced Generative AI applications, ensuring you understand the ‘how’ and ‘why’ behind the intelligent systems shaping 2026.

The core philosophy of the course is to democratize AI knowledge. It begins by shattering the intimidation barrier that often surrounds terms like ‘Neural Networks’ or ‘Reinforcement Learning.’ By grounding these concepts in real-world utility—such as how email filters block spam or how self-driving cars perceive the road—the abstract mathematics of AI becomes tangible.

This article mirrors that journey, breaking down the technical hierarchy of intelligence, exploring the paradigm shift in programming, and providing a concrete roadmap for aspiring AI engineers. We will delve deep into the mechanics of how machines learn, the architecture of deep learning models, and the revolutionary capabilities of Large Language Models (LLMs).

The Hierarchy of Intelligence: AI vs. ML vs. DL vs. GenAI

One of the most critical takeaways from the initial module is the distinction and relationship between the various buzzwords in the industry. Often used interchangeably, AI, Machine Learning (ML), Deep Learning (DL), and Generative AI (GenAI) actually represent a nested hierarchy of technologies. Visualizing this as a Venn diagram provides clarity: Artificial Intelligence is the overarching umbrella term. It encompasses any technique that enables computers to mimic human intelligence, including logic, if-then rules, and decision trees. It is the broad goal of simulating cognitive functions.

Nested within AI is Machine Learning. ML is a specific subset focused on statistical techniques that enable machines to improve at tasks with experience. Unlike broad AI, which might rely on hard-coded rules, ML systems dynamically learn from data. Further down the hierarchy lies Deep Learning, a specialized subset of ML inspired by the biological structure of the human brain. DL uses multi-layered neural networks to solve complex problems like image recognition and natural language processing.

Finally, the newest and most explosive subset is Generative AI. Situated within Deep Learning, GenAI focuses not just on analyzing existing data, but on creating new data—be it text, images, or code—that resembles the training set. Understanding this lineage is crucial for navigating the technical landscape.

The Paradigm Shift: Traditional Programming vs. Machine Learning

To truly grasp the power of Machine Learning, one must understand how it differs fundamentally from the traditional programming paradigm we have used for decades. In traditional software development, a programmer acts as the rule-maker. You provide the computer with two things: the input data and a specific set of rules (the algorithm). The computer processes this to produce an output. For example, to calculate a sum, you give the numbers (data) and the addition operator (rule), and the computer gives the result.

Machine Learning flips this equation on its head. In an ML workflow, you provide the computer with the input data and the desired output (the answers). The computer’s job is to figure out the rules that connect the two. This is a profound shift. For instance, instead of writing an endless series of ‘if’ statements to define what a cat looks like (e.g., ‘if pointy ears’, ‘if whiskers’), you feed the system thousands of images labeled ‘cat’ and thousands labeled ‘not cat.’

The system analyzes the pixel patterns and generates its own mathematical function—a model—that can identify a cat. This ability to derive rules from data allows ML to solve problems that are too complex for manual coding.
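The contrast between the two paradigms can be sketched in a few lines of Python. This is a toy illustration, not the course's own code: the feature (a count of suspicious words per email), the data, and the function names are all hypothetical, and the "model" is a single learned threshold rather than anything a real spam filter would use.

```python
# Traditional programming: a human writes the rule.
def rule_based_is_spam(suspicious_words: int) -> bool:
    return suspicious_words >= 3  # threshold picked by hand (hypothetical)


# Machine learning: the rule (here, one threshold) is derived from labeled data.
def learn_threshold(values, labels):
    """Return the candidate threshold with the fewest training errors."""
    best_threshold, best_errors = None, len(values) + 1
    for threshold in sorted(set(values)):
        errors = sum((v >= threshold) != label for v, label in zip(values, labels))
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


# Inputs plus the desired answers; the program works out the rule.
counts = [0, 1, 1, 2, 4, 5, 7, 9]
is_spam = [False, False, False, False, True, True, True, True]
learned = learn_threshold(counts, is_spam)


def learned_is_spam(suspicious_words: int) -> bool:
    return suspicious_words >= learned


print(learned, learned_is_spam(6), learned_is_spam(1))  # 4 True False
```

The hand-written function encodes the programmer's guess, while `learn_threshold` picks whichever cut-off best separates the labeled examples it was given.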

Three Pillars of Machine Learning

The course categorizes Machine Learning into three distinct types, each defined by the nature of the data and the feedback mechanism used during training. Understanding these three pillars is essential for selecting the right approach for a given problem.

1. Supervised Learning: The Guided Approach

Supervised Learning is the most common form of ML used in industry today. It operates on the principle of a teacher-student relationship. The ‘teacher’ provides the model with a dataset where the correct answers (labels) are already known. The model makes predictions on the data, compares its answers to the actual labels, and adjusts its internal parameters to minimize error. This category is further divided into two main tasks: Regression and Classification.

Regression involves predicting continuous numerical values, such as forecasting house prices based on square footage and location. Classification involves predicting discrete categories, such as determining whether an email is ‘Spam’ or ‘Ham,’ or identifying if a tumor is malignant or benign.
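As a rough sketch of the two tasks, the snippet below fits a straight line by ordinary least squares (regression) and classifies a value by its nearest class average (classification). The data is invented for illustration, and the nearest-centroid classifier is a deliberately simple stand-in rather than the method a production system would use.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept for one feature."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def fit_centroids(xs, labels):
    """Average feature value per class."""
    grouped = {}
    for x, label in zip(xs, labels):
        grouped.setdefault(label, []).append(x)
    return {label: sum(vals) / len(vals) for label, vals in grouped.items()}


def classify(x, centroids):
    """Predict the class whose average is closest to x."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


# Regression: continuous target (hypothetical house sizes and prices).
sizes = [50.0, 80.0, 100.0, 120.0]
prices = [110.0, 170.0, 210.0, 250.0]
slope, intercept = fit_line(sizes, prices)
predicted_price = slope * 90.0 + intercept

# Classification: discrete target (hypothetical link counts per email).
link_counts = [0, 1, 2, 8, 9, 10]
labels = ["ham", "ham", "ham", "spam", "spam", "spam"]
centroids = fit_centroids(link_counts, labels)

print(slope, intercept, predicted_price)
print(classify(7, centroids), classify(2, centroids))
```

In both cases the labels (prices, or spam/ham tags) play the role of the teacher: the fitted parameters are whatever best reproduces the known answers.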

2. Unsupervised Learning: Discovering Hidden Patterns

In contrast, Unsupervised Learning deals with unlabeled data. There is no teacher, and the system is left to find structure within the data on its own. This approach is powerful for exploratory analysis where the outcome is unknown. The primary technique here is Clustering, which groups similar data points together. A classic real-world application is customer segmentation in marketing.

An algorithm can analyze purchasing history to group customers into distinct segments (e.g., ‘budget shoppers,’ ‘tech enthusiasts’) without being told what those segments are in advance. Another technique is Dimensionality Reduction, used to simplify complex datasets while retaining meaningful information.
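Clustering can be illustrated with a minimal one-dimensional k-means sketch. The spending figures and the starting centroids are made up for this example, and real segmentation would use many features and a library implementation; the point is only that no labels are supplied.

```python
def kmeans_1d(points, initial_centroids, iterations=10):
    """Alternate between assigning points to the nearest centroid
    and moving each centroid to the mean of its assigned points."""
    centroids = list(initial_centroids)
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # An empty cluster keeps its previous centroid.
        centroids = [
            sum(members) / len(members) if members else centroids[i]
            for i, members in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical monthly spend per customer; no segment labels are given.
monthly_spend = [10.0, 12.0, 11.0, 95.0, 100.0, 105.0]
centroids, clusters = kmeans_1d(monthly_spend, initial_centroids=[10.0, 12.0])

print(centroids)
print(clusters)
```

Even with both starting centroids placed among the low spenders, the loop settles on one group of low spenders and one of high spenders, which an analyst could then name after the fact.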

3. Reinforcement Learning: Learning by Trial and Error

Reinforcement Learning (RL) is perhaps the most dynamic type of ML. It is loosely analogous to the psychological concept of operant conditioning, in which behavior is shaped by its consequences. An agent interacts with an environment and learns to make decisions by performing actions and receiving feedback in the form of rewards or penalties. The goal is to maximize the cumulative reward over time.

This is the technology behind mastering complex games like Chess and Go, where the agent learns strategies not by studying databases of moves, but by playing millions of games against itself. It is also the foundation of robotics, allowing robots to learn how to walk or grasp objects by physically experimenting and adjusting their motor controls based on success or failure.
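The agent, environment, and reward loop can be sketched with tabular Q-learning, one common RL algorithm (the source does not say which algorithm the course covers). The environment here is a hypothetical five-cell corridor with a reward at the right end, and the hyperparameters are arbitrary choices for illustration.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

N_STATES, GOAL = 5, 4            # corridor cells 0..4, reward at cell 4
LEFT, RIGHT = 0, 1
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration

# q[state][action] estimates the long-term reward of taking that action.
q = [[0.0, 0.0] for _ in range(N_STATES)]


def step(state, action):
    """Environment: move one cell, reward 1.0 on reaching the goal."""
    next_state = max(0, state - 1) if action == LEFT else state + 1
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward


def choose_action(state):
    """Explore at random sometimes (and on ties), otherwise act greedily."""
    if random.random() < EPSILON or q[state][LEFT] == q[state][RIGHT]:
        return random.choice((LEFT, RIGHT))
    return LEFT if q[state][LEFT] > q[state][RIGHT] else RIGHT


for _ in range(500):  # episodes of trial and error
    state = 0
    while state != GOAL:
        action = choose_action(state)
        next_state, reward = step(state, action)
        target = reward + GAMMA * max(q[next_state])
        q[state][action] += ALPHA * (target - q[state][action])
        state = next_state

# Greedy policy for the non-terminal cells.
policy = [RIGHT if q[s][RIGHT] > q[s][LEFT] else LEFT for s in range(GOAL)]
print(policy)
```

Nothing tells the agent that moving right is correct; the preference emerges because actions leading toward the reward accumulate higher value estimates.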

Deep Learning: The Neural Revolution

Deep Learning (DL) marks a major step up in AI capability, enabling systems to handle unstructured data like images, audio, and text, on some tasks with accuracy approaching that of humans. The core architecture of DL is the Artificial Neural Network (ANN), which is loosely modeled after the biological neurons in the human brain. The video explains the structure of a biological neuron (dendrites receiving signals, the cell body processing them, and the axon transmitting the output) and maps this to the artificial perceptron.

In an ANN, layers of artificial neurons are stacked. The ‘Input Layer’ receives the raw data (e.g., pixels of an image). This data is passed through one or more ‘Hidden Layers,’ where mathematical transformations occur using weights and biases. Finally, the ‘Output Layer’ produces the prediction. The ‘Deep’ in Deep Learning refers to the number of these hidden layers. In general, a network with more layers can extract more complex features, although deeper networks also need more data and compute to train.

For instance, early layers might detect simple edges, while deeper layers combine these edges to recognize shapes, and eventually, full objects like faces or cars. This hierarchical feature extraction is what makes DL indispensable for Computer Vision and Natural Language Processing (NLP).
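The input, hidden, and output layers described above can be sketched as a single forward pass. The weights, biases, layer sizes, and activation functions below are arbitrary values chosen for illustration; in a real network the weights would be learned during training rather than typed in.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases, activation):
    """One layer: each neuron computes activation(weighted sum + bias)."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Input layer: three raw feature values (hypothetical).
inputs = [0.5, -1.0, 2.0]

# Hidden layer: two neurons, each with three weights and one bias.
hidden_weights = [[0.2, -0.4, 0.1], [-0.3, 0.8, 0.5]]
hidden_biases = [0.1, -0.2]

# Output layer: one neuron reading the two hidden activations.
output_weights = [[1.0, -1.0]]
output_biases = [0.0]

hidden = dense(inputs, hidden_weights, hidden_biases, relu)
output = dense(hidden, output_weights, output_biases, sigmoid)

print(hidden)
print(output)
```

Adding depth means chaining more `dense` calls, each one transforming the previous layer's activations into a new set of features.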

Generative AI and Large Language Models (LLMs)

The course dedicates a significant section to the current frontier of technology: Generative AI. While traditional AI focuses on analyzing existing data to make predictions (discriminative models), Generative AI focuses on creating new data instances that mimic the training data. This capability has been popularized by Large Language Models (LLMs) like GPT-4 and Gemini. These models are trained on massive text corpora, typically measured in hundreds of billions to trillions of tokens, which allows them to capture context, nuance, and intent.

LLMs work by predicting the next token (typically a word or a fragment of a word) in a sequence, and at sufficient scale this simple objective produces behavior that resembles reasoning. The video emphasizes that GenAI is not just about chatbots; it’s a foundational technology that powers code generation, creative writing, and even synthetic data creation for training other models. The implications for productivity and software development are immense, shifting part of the engineer’s role from writing code syntax to designing high-level prompts and architectures.
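Next-token prediction can be illustrated with a toy bigram model that simply counts which word follows which. This is a drastic simplification made for illustration: real LLMs use Transformer networks over subword tokens rather than word counts, and the tiny corpus below is invented.

```python
from collections import Counter, defaultdict

corpus = "the cat sat on the mat . the cat ate the fish ."
tokens = corpus.split()  # whitespace tokens stand in for real subword tokens

# For every token, count the tokens that follow it in the corpus.
follow_counts = defaultdict(Counter)
for current, following in zip(tokens, tokens[1:]):
    follow_counts[current][following] += 1


def predict_next(token):
    """Return the most frequent follower of `token`, or None if unseen."""
    counts = follow_counts.get(token)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(start, max_new_tokens):
    """Repeatedly append the predicted next token."""
    sequence = [start]
    for _ in range(max_new_tokens):
        nxt = predict_next(sequence[-1])
        if nxt is None:
            break
        sequence.append(nxt)
    return sequence


print(predict_next("the"))
print(" ".join(generate("on", 2)))
```

Generation is just prediction in a loop: each predicted token is appended to the sequence and becomes the context for the next prediction.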

Essential AI Terminologies: Computer Vision and NLP

To navigate the AI landscape effectively, one must be fluent in its specific terminologies. The article highlights two major domains discussed in the video: Computer Vision (CV) and Natural Language Processing (NLP). Computer Vision is the field that enables computers to ‘see’ and interpret visual information from the world. It involves techniques for image classification, object detection, and facial recognition. It is the technology that allows a Tesla to identify a stop sign or a medical imaging system to spot a fracture.

Natural Language Processing (NLP) is the bridge between human communication and computer understanding. It encompasses tasks like sentiment analysis, language translation, and speech recognition. The evolution of NLP from simple keyword matching to the contextual understanding of Transformers (the architecture behind LLMs) marks one of the most significant achievements in computer science history. Understanding these domains is crucial because most real-world AI applications are hybrids, combining CV and NLP—for example, a system that can describe the content of an image in text.

The AI Engineer’s Roadmap: How to Start in 2026

Transitioning from theory to practice, the video outlines a strategic roadmap for students and professionals aspiring to build a career in AI. The path is structured and requires a strong foundation. The first step is mastering a programming language, with **Python** being the undisputed king due to its extensive library support (Pandas, NumPy, Scikit-learn). Proficiency in Python syntax and data structures is non-negotiable.

The second pillar is **Mathematics**. While you don’t need a PhD, a solid grasp of Linear Algebra (for representing data and model operations as vectors and matrices), Calculus (for optimization algorithms), and Probability/Statistics (for understanding data distributions) is vital. These mathematical concepts are the engine under the hood of every ML algorithm. Once the basics are in place, the roadmap suggests diving into **Machine Learning Algorithms**, starting with supervised learning techniques before advancing to neural networks.
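To make the role of calculus concrete, here is a minimal gradient descent sketch that fits a single weight by following the derivative of the mean squared error. The data, learning rate, and step count are assumptions chosen so the example converges quickly; they are not taken from the course.

```python
# Hypothetical data generated by y = 2 * x, so the ideal weight is 2.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]


def loss(w):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient(w):
    """Derivative of the loss with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)


w = 0.0               # initial guess
learning_rate = 0.05  # step size (assumed)
for _ in range(100):
    w -= learning_rate * gradient(w)  # step against the slope

print(w, loss(w))
```

The derivative tells the algorithm which direction reduces the error, and the learning rate controls how far each step moves; deep learning frameworks automate the same idea across millions of weights.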

The final stages involve **Deep Learning frameworks** like TensorFlow or PyTorch. These tools abstract away much of the complex math, allowing engineers to build and train neural networks efficiently. The roadmap concludes with **MLOps** and deployment—learning how to take a model from a Jupyter notebook and deploy it into a scalable production environment. This holistic approach ensures that learners are not just theoreticians but capable engineers ready for the industry.

Embracing the AI Revolution

As we stand on the threshold of an AI-driven future, the ‘AI Complete OneShot Course’ provides more than just technical knowledge; it offers a perspective on how to coexist and thrive with these technologies. Shradha Khapra’s lecture emphasizes that AI is not a replacement for human intelligence but an augmentation of it. By automating routine cognitive tasks, AI frees us to focus on higher-level creativity and strategic problem-solving.

Whether you are a student, a developer, or a curious enthusiast, the journey into Artificial Intelligence is one of continuous learning. The tools and concepts outlined here—from the basic regression model to the complex generative transformer—are the building blocks of the next decade’s innovations. The future belongs to those who understand these tools, and this guide serves as your first step toward that mastery.